Scikit-NeuroMSI is an open-source Python framework that simplifies the implementation of neurocomputational models of multisensory integration.

Research on the neural mechanisms underlying multisensory integration—where unisensory signals combine to produce distinct multisensory responses—has surged in recent years. Despite this progress, a unified theoretical framework for multisensory integration remains elusive. Scikit-NeuroMSI aims to bridge this gap by providing a standardized, flexible, and extensible platform for modeling multisensory integration, fostering the development of theories that connect neural and behavioral responses.

Scikit-NeuroMSI was designed to meet three fundamental requirements in the computational study of multisensory integration:

- Modeling Standardization: A standardized interface for implementing and analyzing different types of models. The package currently supports the following model families: Maximum Likelihood Estimation, Bayesian Causal Inference, and Neural Networks.
- Data Processing Pipeline: Multidimensional data processing across spatial dimensions (1D to 3D spatial coordinates), temporal sequences, and multiple sensory modalities (e.g., visual, auditory, touch).
- Analysis Tools: Integrated tools for parameter sweeps across model configurations, visualization and export of results, and statistical analysis of model outputs.
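To give some intuition for the first model family, the textbook maximum likelihood estimation (MLE) rule combines two cues by weighting each one by its reliability (inverse variance). The sketch below is library-independent and illustrative only: `mle_combine` is a hypothetical helper, not part of scikit-neuromsi's API, and the positions and sigmas are example values.

```python
import math


def mle_combine(x_a, sigma_a, x_v, sigma_v):
    """Hypothetical helper: reliability-weighted (MLE) fusion of an
    auditory and a visual location estimate (not a scikit-neuromsi API)."""
    # Reliability of each cue is its inverse variance
    r_a, r_v = 1.0 / sigma_a**2, 1.0 / sigma_v**2
    w_a = r_a / (r_a + r_v)
    w_v = r_v / (r_a + r_v)
    # Combined estimate is the reliability-weighted average of the cues
    x_av = w_a * x_a + w_v * x_v
    # Combined variance is smaller than either unisensory variance
    sigma_av = math.sqrt(1.0 / (r_a + r_v))
    return x_av, sigma_av


x_av, sigma_av = mle_combine(x_a=45, sigma_a=4.5, x_v=51, sigma_v=3.5)
print(f"combined location: {x_av:.2f} deg, combined sigma: {sigma_av:.2f} deg")
```

Because the visual cue is assumed to be more reliable here (smaller sigma), the combined estimate is pulled toward the visual position, and its uncertainty is lower than that of either cue alone.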

In addition, a core module provides features that facilitate the implementation of new models of multisensory integration.

You need Python 3.10+ to run scikit-neuromsi.
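If you are unsure which interpreter you are using, a quick check like the following can help. It is a small illustrative sketch; `python_is_supported` is a hypothetical helper, not something shipped with scikit-neuromsi.

```python
import sys


def python_is_supported(version=sys.version_info, minimum=(3, 10)):
    """Hypothetical helper: True if the interpreter meets the 3.10+ requirement."""
    # Compare only (major, minor); tuples compare element by element
    return tuple(version[:2]) >= minimum


if not python_is_supported():
    print(f"Python {sys.version.split()[0]} is too old; scikit-neuromsi needs 3.10+")
```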

Run the following command:

pip install scikit-neuromsior clone this repo and then inside the local directory execute:

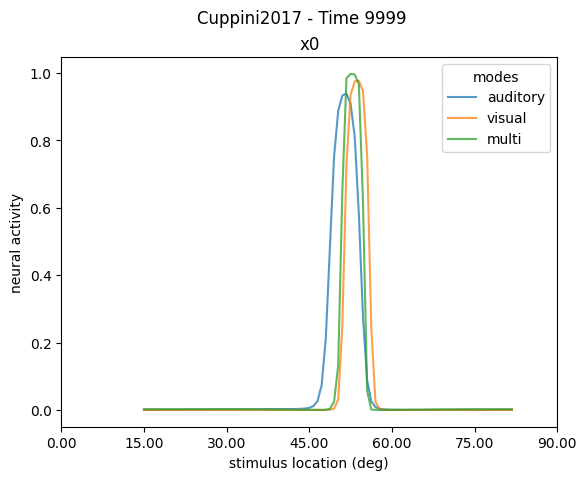

pip install -e .Simulate responses from existing models of multisensory integration (e.g. audio-visual causal inference network from Cuppini et al. (2017)):

from skneuromsi.neural import Cuppini2017

# Model setup

model_cuppini2017 = Cuppini2017(neurons=90,

position_range=(0, 90))

# Model execution

res = model_cuppini2017.run(auditory_position=35,

visual_position=52)

# Results plot

ax1 = plt.subplot()

res.plot.linep(ax=ax1)

ax1.set_ylabel("neural activity")

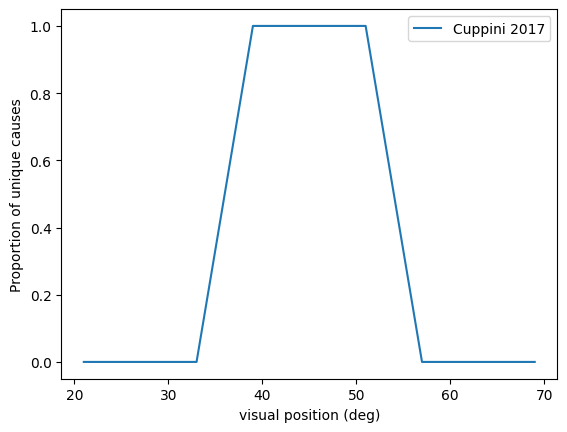

ax1.set_xlabel("stimulus location (deg)")Simulate multisensory integration experiments (e.g. causal inference under spatial disparity - "ventriloquist effect") using the parameter sweep tool:

```python
import matplotlib.pyplot as plt
import numpy as np

from skneuromsi.sweep import ParameterSweep

# Experiment setup (reuses model_cuppini2017 from the previous example)
spatial_disparities = np.array([-24, -12, -6, -3, 3, 6, 12, 24])

sp_cuppini2017 = ParameterSweep(
    model=model_cuppini2017,
    target="visual_position",
    repeat=1,
    range=45 + spatial_disparities,
)

# Experiment run
res_sp_cuppini2017 = sp_cuppini2017.run(
    auditory_position=45,
    auditory_sigma=4.5,
    visual_sigma=3.5,
)

# Experiment results plot
ax1 = plt.subplot()
res_sp_cuppini2017.plot(kind="unity_report", label="Cuppini 2017", ax=ax1)
ax1.set_xlabel("visual position (deg)")
```

For more detailed examples and advanced usage, refer to the Scikit-NeuroMSI Documentation.
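The parameter sweep in the ventriloquist example reports a unity (common-cause) judgment for each visual position. As a point of comparison, the following is a minimal sketch of what an idealized Bayesian causal inference observer would predict for the same disparities and sigmas. It is not the Cuppini et al. (2017) network and not scikit-neuromsi's implementation: it uses the closed-form Gaussian expressions from Körding et al. (2007), treats the stimulus positions as noise-free measurements, and the prior mean `mu_p=45`, prior width `sigma_p=20`, and `prior_common=0.5` are hypothetical values chosen only for illustration.

```python
import math


def p_common(x_a, x_v, sigma_a, sigma_v, sigma_p=20.0, mu_p=45.0, prior_common=0.5):
    """Posterior probability of a common cause, p(C=1 | x_a, x_v).

    sigma_p, mu_p and prior_common are illustrative assumptions,
    not scikit-neuromsi defaults.
    """
    va, vv, vp = sigma_a**2, sigma_v**2, sigma_p**2
    # Likelihood of both measurements under one shared source (C=1)
    d1 = va * vv + va * vp + vv * vp
    q1 = ((x_v - x_a) ** 2 * vp + (x_v - mu_p) ** 2 * va + (x_a - mu_p) ** 2 * vv) / d1
    like_c1 = math.exp(-0.5 * q1) / (2 * math.pi * math.sqrt(d1))
    # Likelihood under two independent sources (C=2)
    q2 = (x_v - mu_p) ** 2 / (vv + vp) + (x_a - mu_p) ** 2 / (va + vp)
    like_c2 = math.exp(-0.5 * q2) / (2 * math.pi * math.sqrt((vv + vp) * (va + vp)))
    # Bayes' rule over the two causal structures
    num = like_c1 * prior_common
    return num / (num + like_c2 * (1 - prior_common))


# Same disparities and sigmas as the sweep example; auditory stimulus fixed at 45 deg
disparities = [-24, -12, -6, -3, 3, 6, 12, 24]
auditory_position = 45
for d in disparities:
    x_v = auditory_position + d
    p = p_common(auditory_position, x_v, sigma_a=4.5, sigma_v=3.5)
    print(f"disparity {d:+3d} deg (visual at {x_v}): p(common) = {p:.2f}")
```

Under these assumptions the predicted probability of a common cause is symmetric around zero disparity and falls off as the visual and auditory positions move apart, which is the qualitative shape a unity-report curve is expected to show.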

We welcome contributions to Scikit-NeuroMSI!

If you're a multisensory integration researcher, we encourage you to integrate your models directly into our package. If you're a software developer, we'd love your help in enhancing the overall functionality of Scikit-NeuroMSI.

For detailed information on how to contribute ideas, report bugs, or improve the codebase, please refer to our Contributing Guidelines.

Scikit-NeuroMSI is released under the 3-Clause BSD License.

This license allows unlimited redistribution for any purpose as long as its copyright notices and the license’s disclaimers of warranty are maintained.

If you want to cite Scikit-NeuroMSI, please use the following references:

Paredes, R., Cabral, J. B., & Seriès, P. (2025). Scikit-NeuroMSI: A Generalized Framework for Modeling Multisensory Integration. Neuroinformatics, 23(40), 1-21. https://doi.org/10.1007/s12021-025-09738-1

Paredes, R., Seriès, P., & Cabral, J. (2023). Scikit-NeuroMSI: a Python framework for multisensory integration modelling. IX Congreso de Matematica Aplicada, Computacional e Industrial, 9, 545–548.