I am an Applied Research Scientist at AllSci Corp, where I build AI co-scientists for long-horizon scientific exploration in the life sciences. My work focuses on developing scientific reasoning systems that can decompose papers into hypotheses, research questions, and results, and combine retrieval, post-training, reinforcement learning, and evidence synthesis to support discovery.

At AllSci, I've been building agentic research systems for therapeutic landscape analysis, molecule due diligence, and biomedical evidence synthesis across scientific literature, drug assets, clinical trials, and hypothesis databases. This work includes agentic deep-research workflows that analyze target biology and mechanism-of-action questions, compare safety and efficacy signals across drugs, surface sponsor/phase/status patterns in clinical-trial data, and support hypothesis discovery. I have also been developing pipelines that break biomedical papers into hypotheses, research questions, and results, along with retrieval, evaluation, and citation-validation infrastructure that makes scientific outputs more grounded, benchmarked, and reliable.

Broadly, my research interests lie at the intersection of Large Language Models, Representation Learning, Science of Science, Information Theory, and Uncertainty Quantification. Previously, I was a Research Scientist at the Center for Science of Science and Innovation, working with Dashun Wang at the Kellogg School of Management, Northwestern University. My doctoral research, advised by Dr. Hamed Alhoori and fully funded by an NSF grant, focused on building a predictive modeling framework to investigate the reproducibility crisis in AI. During my Ph.D., I was a Givens Research Associate and a Graduate Student Researcher at the Argonne Leadership Computing Facility (ALCF). I also led a session on using AutoML for graph neural architecture search at the ALCF DeepHyper Automated Machine Learning Workshop.

- 🔭 I’m currently working on post-training large language models and building scientific LLM agents.

- 👯 I’m looking to collaborate on ways to improve agentic peer review for the broader scholarly ecosystem.

- 🌱 When I’m bored, I explore the utility of combining LLMs with graphs.

- 💬 Ask me about Large Language Models, Representation Learning, Information Theory, Graphs, Uncertainty Quantification, and Neural Architecture Search.

- 📫 How to reach me: @akhilpandey95

- 😄 Pronouns: (he/him)

- ⚡ Fun fact: Haskell has type inference, meaning the compiler can automatically determine the type of an expression from how it is constructed and used, so you rarely need to write type signatures.
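To make the fun fact concrete, here is a small illustrative sketch (the function names `double`, `greet`, and `pairUp` are hypothetical examples, not from any project). None of the helpers carries a type signature; GHC infers each one, and you can confirm the inferred types with `:type` in GHCi. Note that `double` is inferred as polymorphic, so the same definition works on both integers and floating-point numbers.

```haskell
-- None of these helpers has a type signature; GHC infers each one.

-- Inferred: double :: Num a => a -> a
double x = x * 2

-- Inferred: greet :: [Char] -> [Char]   (i.e. String -> String)
greet name = "Hello, " ++ name

-- Inferred: pairUp :: a -> b -> (a, b)
pairUp x y = (x, y)

main :: IO ()
main = do
  print (double 21)          -- literal defaults to Integer
  print (double 1.5)         -- the same function, now used at Double
  putStrLn (greet "GitHub")
  print (pairUp 'a' True)    -- works for any two argument types
```

Running it prints `42`, `3.0`, `Hello, GitHub`, and `('a',True)`.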

Pre-review to Peer review | Pitfalls of Automating Reviews using Large Language Models

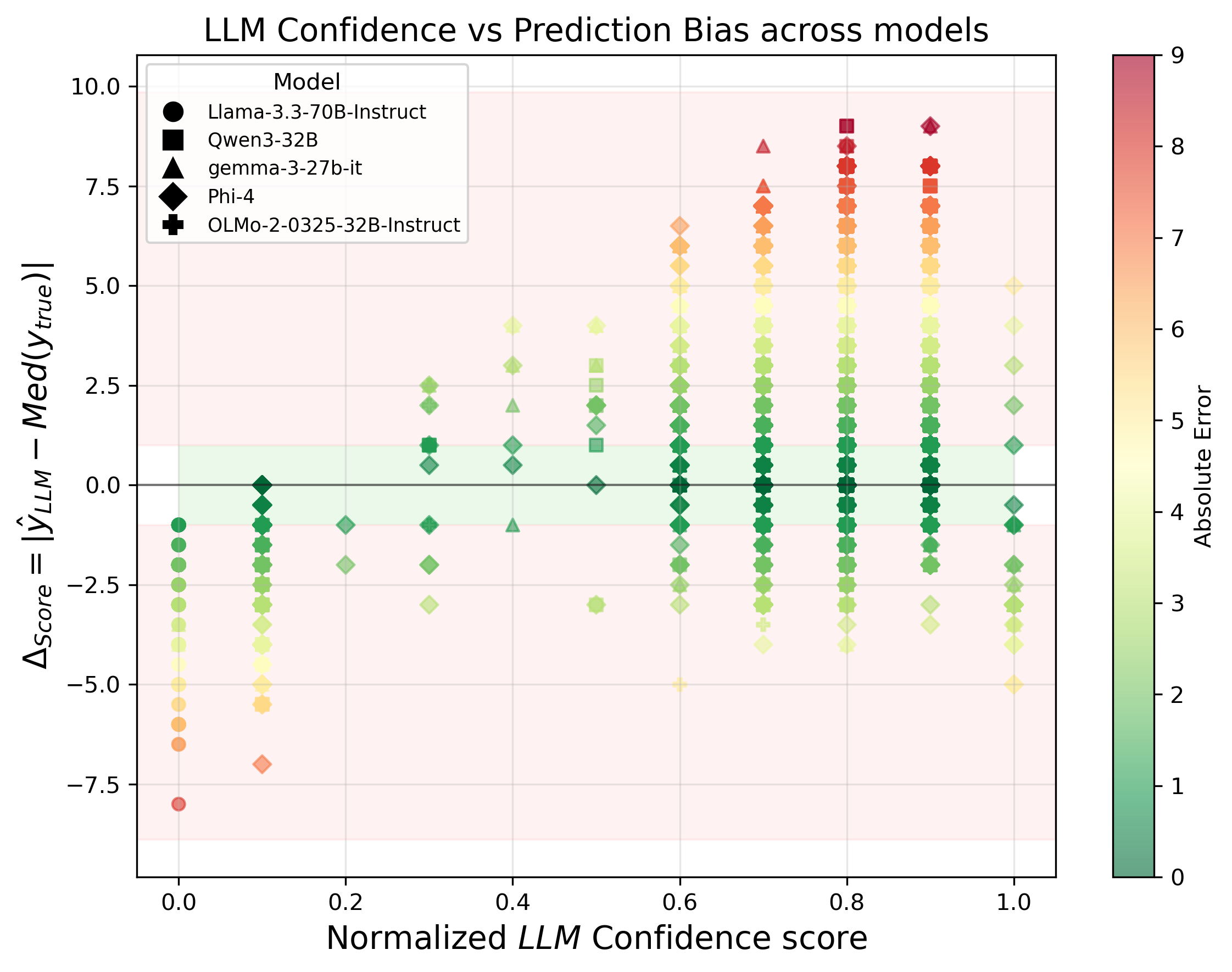

ReSci-Agent | Agentic PeerReview