Mini-SGLang supports online serving with an OpenAI-compatible API server. It provides the standard /v1/chat/completions endpoint, allowing seamless integration with existing tools and clients. For detailed command-line arguments and configuration options, run python -m minisgl --help.

For demonstration and testing purposes, an interactive shell mode is available. In this mode, users can input prompts directly, and the LLM will generate responses in real-time. The shell automatically caches chat history to maintain context. To clear the conversation history and start a new session, use the /reset command.

Example:

python -m minisgl --model "Qwen/Qwen3-0.6B" --shellTo scale performance across multiple GPUs, Mini-SGLang supports Tensor Parallelism (TP). You can enable distributed serving by specifying the number of GPUs with the --tp n argument, where n is the degree of parallelism.

Our framework currently supports the following dense model architectures:

Chunked Prefill, a technique introduced by Sarathi-Serve, is enabled by default. This feature splits long prompts into smaller chunks during the prefill phase, significantly reducing peak memory usage and preventing Out-Of-Memory (OOM) errors in long-context serving. The chunk size can be configured using --max-prefill-length n. Note that setting n to a very small value (e.g., 128) is not recommended as it may significantly degrade performance.

You can specify the page size of the system using the --page-size argument.

Mini-SGLang integrates high-performance attention kernels, including FlashAttention (fa), FlashInfer (fi) and TensorRT-LLM fmha (trtllm). It supports using different backends for the prefill and decode phases to maximize efficiency. For example, on NVIDIA Hopper GPUs, FlashAttention 3 is used for prefill and FlashInfer for decode by default.

You can specify the backend using the --attn argument. If two values are provided (e.g., --attn fa,fi), the first specifies the prefill backend and the second the decode backend. Note that some attention backend might override the user-provided page size (e.g. trtllm only supports page size 16,32,64).

To minimize CPU launch overhead during decoding, Mini-SGLang supports capturing and replaying CUDA graphs. This feature is enabled by default. The maximum batch size for CUDA graph capture can be set with --cuda-graph-max-bs n. Setting n to 0 disables this feature.

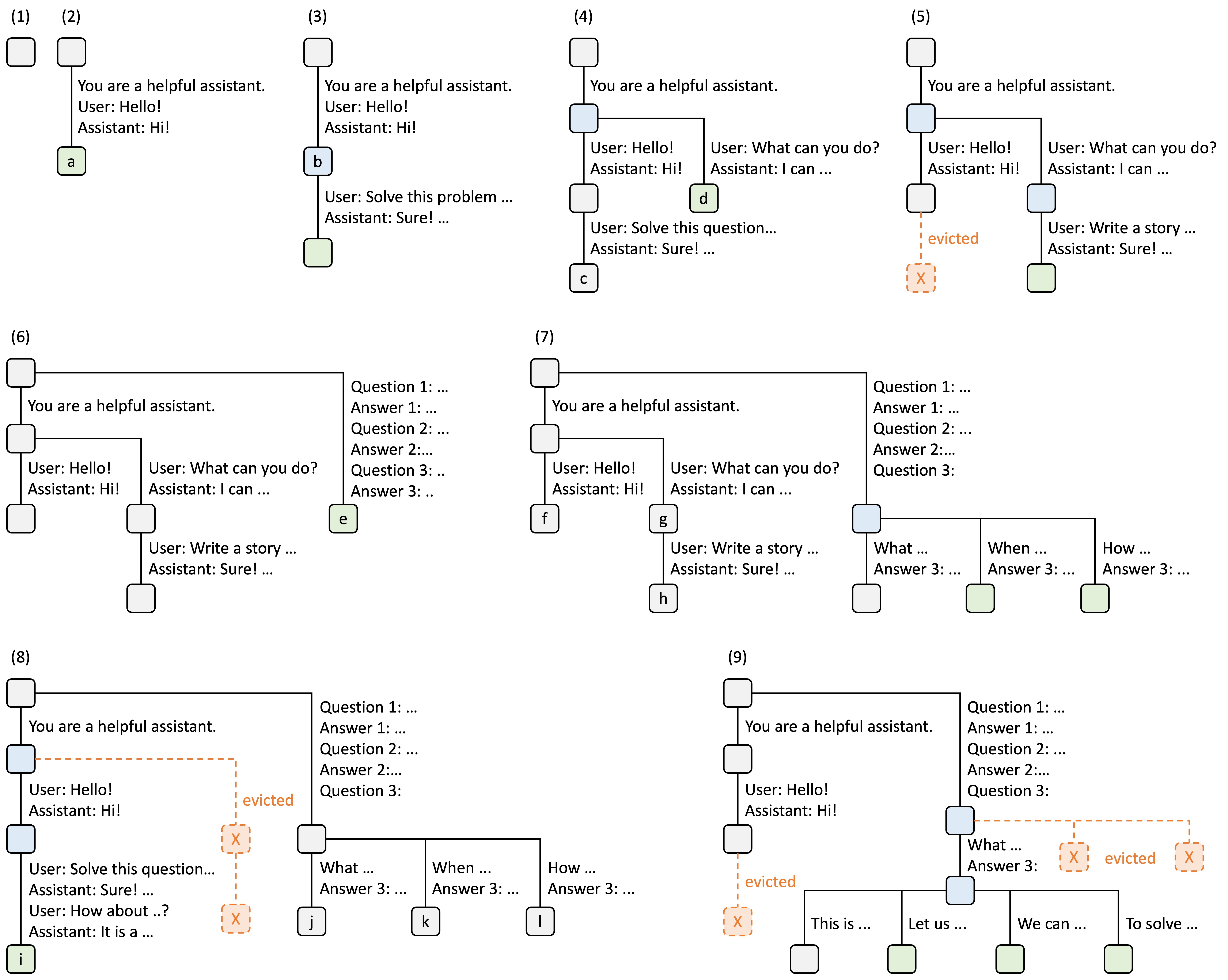

Adopting the original design from SGLang, Mini-SGLang implements a Radix Cache to manage the Key-Value (KV) cache. This allows the reuse of KV cache for shared prefixes across requests, reducing redundant computation. This feature is enabled by default but can be switched to a naive cache management strategy using --cache naive.

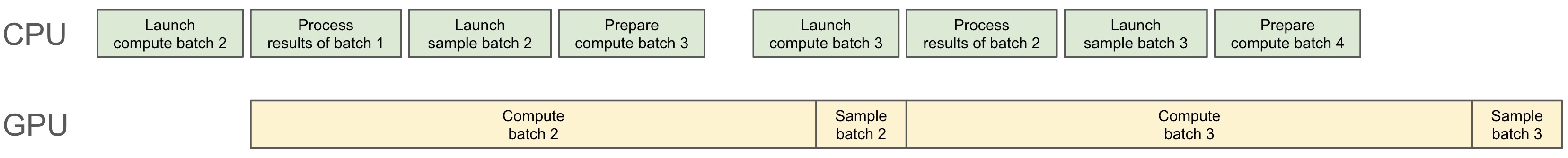

To further reduce CPU overhead, Mini-SGLang employs overlap scheduling, a technique proposed in NanoFlow. This approach overlaps the CPU scheduling overhead with GPU computation, improving overall system throughput.